Benchmarks for Evaluating Performance of Recommender System Architectures

Justin J. Levandoski, Michael D. Ekstrand, Michael J. Ludwig, Ahmad Eldawy, Mohamed F. Mokbel, and John T. Riedl. 2011. RecBench: Benchmarks for Evaluating Performance of Recommender System Architectures. In Proceedings of the VLDB Endowment. Seattle, (August 2011), 911-920.

Abstract

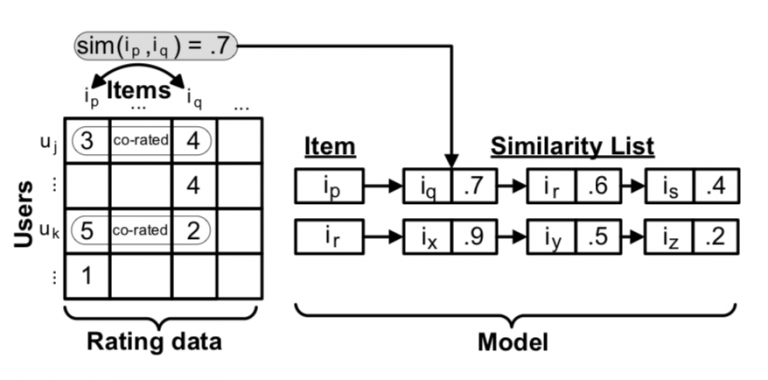

Traditionally, recommender systems have been “hand-built”: implemented as custom applications hard-wired to a particular recommendation task. Recently, the database community has begun exploring alternative DBMS-based recommender system architectures, in which a database both stores the recommender system data (e.g., ratings data and the derived recommender models) and generates recommendations using SQL queries. In this paper, we present a comprehensive experimental comparison of both architectures. We define a set of benchmark tasks based on the needs of a typical recommender-powered e-commerce site. We then evaluate the performance of the “hand-built” MultiLens recommender application against two DBMS-based implementations: an unmodified DBMS and RecStore, a DBMS modified to improve the efficiency of incremental recommender model updates. We employ two non-trivial data sets in our study: the 10-million-rating MovieLens data set and the 100-million-rating data set used in the Netflix Challenge. This study is the first of its kind, and our findings reveal an interesting trade-off: “hand-built” recommenders exhibit superior performance in model-building and pure recommendation tasks, while DBMS-based recommenders are superior at more complex recommendation tasks such as providing filtered recommendations and blending text search with recommendation prediction scores.
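To make the DBMS-based architecture concrete, here is a minimal sketch. It assumes an item-based collaborative-filtering model stored in the database as a table of precomputed item-item similarities. The schema (`ratings`, `item_sim`, `items`), the similarity-weighted scoring formula, and the `recommend` and `demo_db` helpers are hypothetical illustrations. They are not the queries used by RecStore or by the benchmark. The sketch uses Python's built-in `sqlite3` module to express both a pure recommendation query and a filtered one, where candidates are restricted by an assumed `genre` attribute, as a single SQL statement.

```python
import sqlite3
from typing import List, Optional, Tuple

# Hypothetical schema (not the paper's): raw ratings, a precomputed
# item-item similarity model (stored as directed pairs), and item metadata.
SCHEMA = """
CREATE TABLE ratings  (user_id INTEGER, item_id INTEGER, rating REAL);
CREATE TABLE item_sim (item_a INTEGER, item_b INTEGER, sim REAL);
CREATE TABLE items    (item_id INTEGER PRIMARY KEY, genre TEXT);
"""

# Score for a candidate item = similarity-weighted average of the user's
# ratings on items linked to it in the model; already-rated items are skipped.
RECOMMEND_SQL = """
SELECT s.item_b AS item_id,
       SUM(s.sim * r.rating) / SUM(ABS(s.sim)) AS score
FROM ratings AS r
JOIN item_sim AS s ON s.item_a = r.item_id
JOIN items AS i ON i.item_id = s.item_b
WHERE r.user_id = :user
  AND (:genre IS NULL OR i.genre = :genre)  -- optional filter
  AND s.item_b NOT IN (SELECT item_id FROM ratings WHERE user_id = :user)
GROUP BY s.item_b
ORDER BY score DESC
LIMIT :k
"""


def recommend(conn: sqlite3.Connection, user: int, k: int,
              genre: Optional[str] = None) -> List[Tuple[int, float]]:
    """Return up to k (item_id, score) pairs, optionally filtered by genre."""
    params = {"user": user, "genre": genre, "k": k}
    return conn.execute(RECOMMEND_SQL, params).fetchall()


def demo_db() -> sqlite3.Connection:
    """Build a tiny in-memory database with made-up example data."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO ratings VALUES (?, ?, ?)",
                     [(1, 1, 5.0), (1, 2, 3.0)])
    conn.executemany("INSERT INTO item_sim VALUES (?, ?, ?)",
                     [(1, 2, 0.4), (1, 3, 0.9), (2, 3, 0.2),
                      (1, 4, 0.5), (2, 4, 0.8)])
    conn.executemany("INSERT INTO items VALUES (?, ?)",
                     [(1, "drama"), (2, "drama"), (3, "comedy"), (4, "drama")])
    return conn


if __name__ == "__main__":
    conn = demo_db()
    print(recommend(conn, user=1, k=10))                 # pure recommendation
    print(recommend(conn, user=1, k=10, genre="drama"))  # filtered recommendation
```

The filter is simply one more predicate in the same query, so the database can combine it with the scoring join instead of post-filtering a ranked list in application code. This is one plausible reading of why filtered recommendation is a natural fit for the DBMS-based approach.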